Pipeline — Step 4

The LLM reads the retrieved passages and generates a clear, natural-language answer. Every claim cites the exact source paragraph. If the answer isn't in your documents, it says so.

The Problem

The #1 reason businesses don't deploy AI for serious work: hallucination. Generic chatbots generate confident-sounding answers that are completely fabricated. In legal, healthcare, or compliance contexts, a wrong answer isn't just unhelpful — it's dangerous.

CorpusAI solves this by grounding every answer in your documents. The LLM is never asked to "know" anything. It's asked to synthesize the passages that were retrieved in Step 3.

The result: every claim maps to a source. Every source is verifiable. Every answer is trustworthy.

How It Works

Hybrid search finds the 8 most relevant chunks from your corpus.

Retrieved passages are injected as context into the LLM prompt.

The LLM synthesizes a coherent answer using only the provided context.

Every claim is tagged with its source document, page, and paragraph.
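The four steps above can be sketched in a few lines of Python. This is an illustration only, not CorpusAI's actual implementation: the `Chunk` type, the `build_prompt` and `resolve_citations` helpers, the `[n]` citation format, and the prompt wording are all assumptions made for the example. It shows how retrieved passages might be numbered inside the prompt so that each citation in the answer can be traced back to a document, page, and paragraph.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    """A retrieved passage plus the location it came from (hypothetical type)."""
    document: str
    page: int
    paragraph: int
    text: str


def build_prompt(question: str, chunks: list[Chunk]) -> str:
    """Number each retrieved passage so the model can cite it as [n]."""
    passages = "\n".join(
        f"[{i}] ({c.document}, p. {c.page}, para. {c.paragraph}) {c.text}"
        for i, c in enumerate(chunks, start=1)
    )
    return (
        "Answer using only the passages below. "
        "Cite each claim with its passage number in square brackets.\n\n"
        f"Passages:\n{passages}\n\nQuestion: {question}"
    )


def resolve_citations(answer: str, chunks: list[Chunk]) -> list[Chunk]:
    """Map [n] markers in the answer back to source chunks, in order of first use.

    Markers that do not match a retrieved passage are ignored.
    """
    cited: list[Chunk] = []
    for marker in re.findall(r"\[(\d+)\]", answer):
        index = int(marker)
        if 1 <= index <= len(chunks) and chunks[index - 1] not in cited:
            cited.append(chunks[index - 1])
    return cited
```

Given an answer such as "Refunds are issued within 14 days [1].", `resolve_citations` returns the first chunk, whose `document`, `page`, and `paragraph` fields can then be shown next to the claim.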

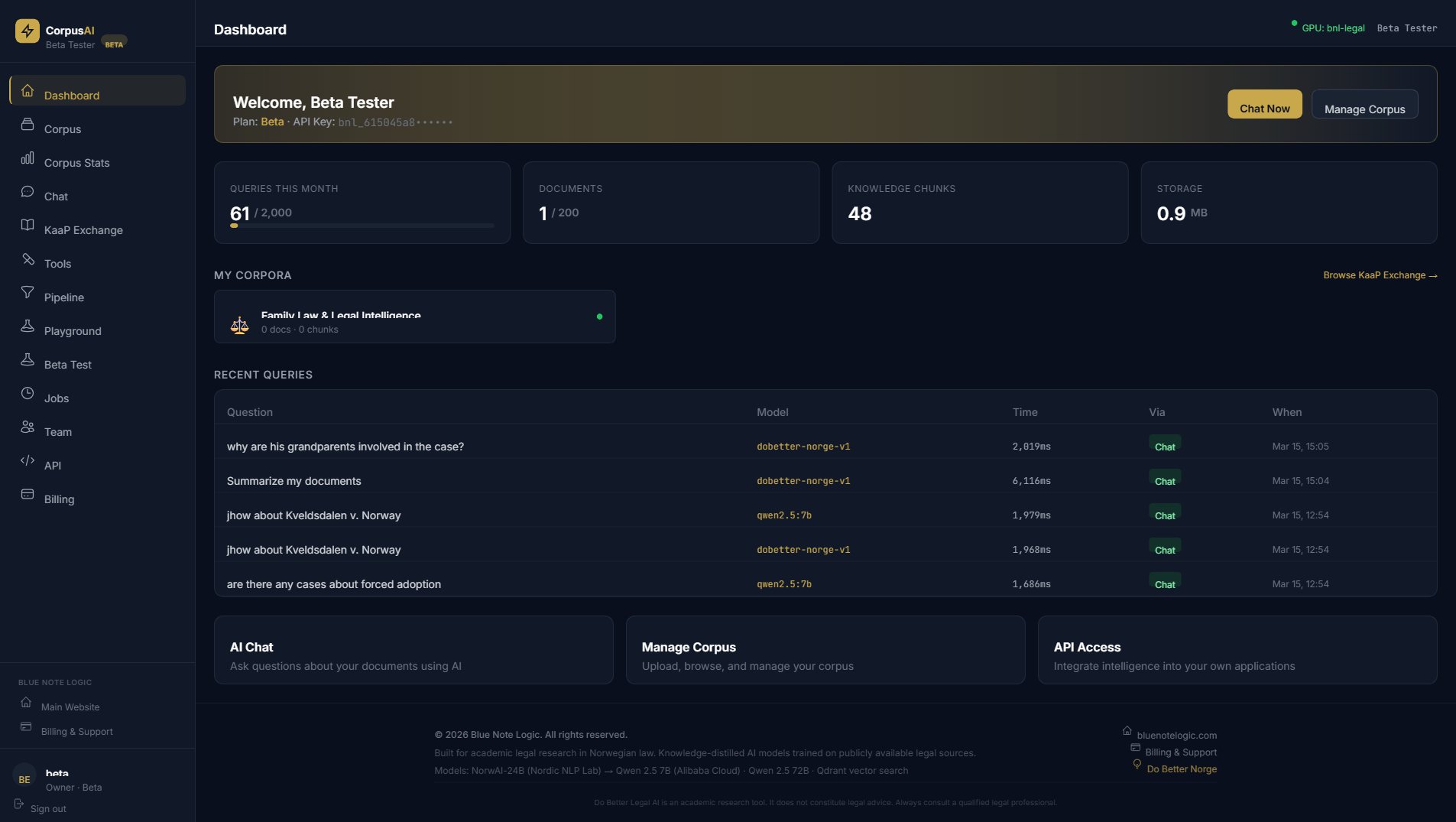

Full Visibility

The dashboard shows every question your team has asked, which model answered, response time, and which interface (Chat, API, MCP) was used. This isn't just logging — it's intelligence about how your organization uses AI.

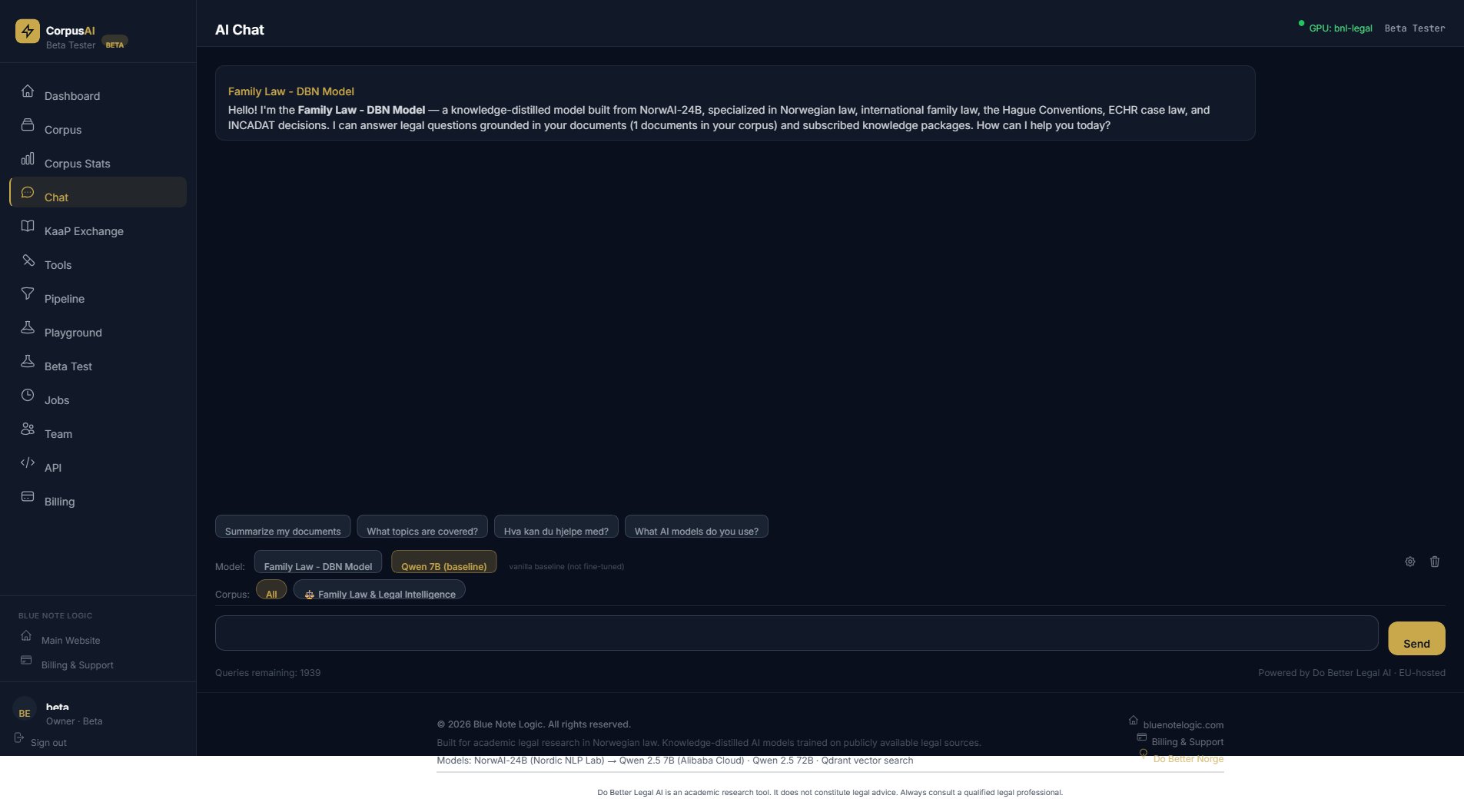

Your AI Models

Sandbox Plan

Fast document search and basic Q&A. Great for exploring what corpus intelligence can do.

Professional & Corporate

Deep reasoning, complex multi-step analysis. NorwAI-24B backbone with Qwen 2.5 inference. Running on NVIDIA RTX PRO 6000.

Sovereign Plan

A model trained on your corpus. Faster inference, deeper domain knowledge. You own the weights. Take them anywhere.

If the answer isn't in your documents, CorpusAI says "I don't have enough information to answer that." No fabrication. No confident nonsense. This is what separates document intelligence from a chatbot.
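One way to enforce this behavior is a guard that runs after the model drafts an answer. The sketch below is an assumption, not a description of how CorpusAI works internally: the `answer_or_refuse` function and the `[n]` citation format are hypothetical. It returns the refusal message when nothing was retrieved or when the draft cites no retrieved passage.

```python
import re

# The refusal wording is taken from the text above.
REFUSAL = "I don't have enough information to answer that."


def answer_or_refuse(draft: str, num_passages: int) -> str:
    """Return the draft only if it cites at least one retrieved passage.

    Assumes citations appear as [n], where n is between 1 and num_passages.
    """
    if num_passages == 0:
        return REFUSAL
    cited = {int(n) for n in re.findall(r"\[(\d+)\]", draft)}
    if not any(1 <= n <= num_passages for n in cited):
        return REFUSAL
    return draft
```

A check like this only confirms that a citation is present and points at a retrieved passage. Verifying that the cited passage actually supports the claim would need a further step.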

Upload your first document. Ask your first question. Get a cited answer in under 60 seconds. No credit card required.